A kind reader, Baruch Even, has pointed out a mistake in my previous blog post about how SATA Native Command Queuing (NCQ) works with Solid State Drives (SSDs).

In that post, I carelessly stated that NCQ was meant for spinning mechanical drives. I was wrong.

NCQ does indeed improve the performance of SSDs that use SATA interfaces, sometimes by as much as 15-20%. I know the SATA Wikipedia page states that NCQ boosted IOPS by 100%, but I would rather take a more realistic view than set expectations too high.

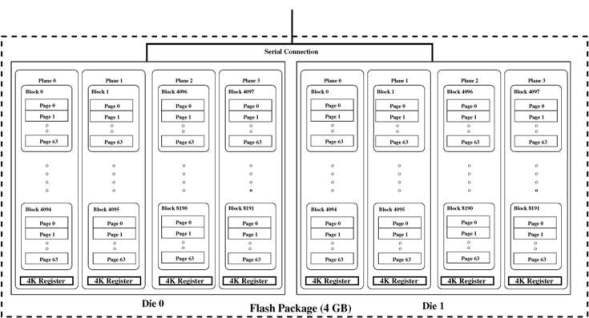

The typical SSD consists of flash storage spread across multiple chips, which are the flash packages. Within each flash package there are several dies (the manufacturing term “die”, nothing to do with death), each of which houses planes (not related to aeroplanes), which are in turn divided into blocks and pages.
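To make the hierarchy concrete, here is a minimal sketch of how a flat page number inside one flash package could be split into die, plane, block and page. The geometry constants and the `locate` helper are my own assumptions for illustration; real drives use different sizes and different layouts.

```python
from typing import NamedTuple

# Hypothetical geometry, chosen only for illustration; real drives differ.
DIES_PER_PACKAGE = 2
PLANES_PER_DIE = 2
BLOCKS_PER_PLANE = 4
PAGES_PER_BLOCK = 8


class FlashAddress(NamedTuple):
    die: int
    plane: int
    block: int
    page: int


def locate(page_index: int) -> FlashAddress:
    """Split a flat page index within one package into die/plane/block/page."""
    total = DIES_PER_PACKAGE * PLANES_PER_DIE * BLOCKS_PER_PLANE * PAGES_PER_BLOCK
    if not 0 <= page_index < total:
        raise ValueError("page index outside the package")
    # Peel off the smallest unit first, then work upwards through the hierarchy.
    rest, page = divmod(page_index, PAGES_PER_BLOCK)
    rest, block = divmod(rest, BLOCKS_PER_PLANE)
    die, plane = divmod(rest, PLANES_PER_DIE)
    return FlashAddress(die, plane, block, page)
```

With this made-up geometry, `locate(32)` lands on the first page of the first block in plane 1 of die 0.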

The diagram below (from the Usenix paper at https://www.usenix.org/legacy/event/usenix08/tech/full_papers/agrawal/agrawal_html/) gives an overview of the internals of an SSD in the form of a flash package.

At the upper layers of the OS and file system, logical reads and writes are mapped to the physical addressing of the solid state drive. The Flash Translation Layer (FTL), shown in the diagram below, is responsible for the logical-to-physical mapping and also makes the SSD look like a logical drive to the upper layers.
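The logical-to-physical mapping can be pictured as a lookup table. The sketch below is a toy page-mapped FTL, not how any particular drive implements it; the `SimpleFTL` class and its method names are hypothetical. It also assumes the common flash behaviour of writing updates to a fresh page rather than overwriting in place, which goes a little beyond what this post covers.

```python
class SimpleFTL:
    """Toy page-level FTL: a hypothetical illustration, not a real drive's design."""

    def __init__(self, num_physical_pages: int) -> None:
        self.mapping = {}                                   # logical page -> physical page
        self.free_pages = list(range(num_physical_pages))   # pages not yet written
        self.invalid = set()                                # stale pages awaiting erase

    def write(self, logical_page: int) -> int:
        """Place the logical page on the next free physical page and remap it."""
        if not self.free_pages:
            raise RuntimeError("no free pages; a real FTL would garbage-collect here")
        old = self.mapping.get(logical_page)
        if old is not None:
            self.invalid.add(old)       # the previous copy is now stale
        physical = self.free_pages.pop(0)
        self.mapping[logical_page] = physical
        return physical

    def read(self, logical_page: int) -> int:
        """Return the physical page currently holding the logical page."""
        return self.mapping[logical_page]
```

The upper layers only ever see logical page numbers such as 10 or 11; where the data physically sits can change on every write without them noticing.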

As read and write requests are passed to the SATA controller, they are collected in a queue. The NCQ function of the SATA controller then decides the optimal order in which to dispatch the I/O. The pipeline rescheduling algorithm used by NCQ controls how I/O is spread across the dies in the flash package, because read and write I/Os cannot be executed on the same die simultaneously.
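The idea of reordering a queue so that work is spread across dies can be sketched in a few lines. This is only my simplified illustration of the principle, not the pipeline rescheduling algorithm itself; the `schedule_rounds` function and the `(tag, die)` request format are assumptions made up for the example.

```python
from collections import deque


def schedule_rounds(queue):
    """Group queued commands into rounds with at most one command per die.

    `queue` is a list of (tag, die) pairs in arrival order. Commands aimed at
    the same die keep their relative order, since a die handles one at a time.
    """
    per_die = {}
    for tag, die in queue:
        per_die.setdefault(die, deque()).append(tag)

    rounds = []
    while any(per_die.values()):
        # Take the oldest pending command from every die that still has work.
        rounds.append([pending.popleft() for pending in per_die.values() if pending])
    return rounds


queued = [("A", 0), ("B", 0), ("C", 1), ("D", 0), ("E", 1)]
print(schedule_rounds(queued))  # [['A', 'C'], ['B', 'E'], ['D']]
```

Here five queued commands fit into three rounds because commands for die 1 are pulled forward to run alongside those for die 0, instead of waiting behind them in arrival order.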

In the diagram below, the pipeline rescheduling algorithm is compared to the more sequential-like multiplane rescheduling algorithm while pushing I/O to the flash die.

(The diagram is courtesy of IEEE Computer Architecture Letters, Vol. 9, No. 1, Jan-Jun 2010.)

In short, NCQ introduces I/O parallelism to take advantage of the speed and parallel processing power of solid state drives.

I made this mistake out of ignorance, but I am glad I did, because the generosity of our reader, Baruch, turned it into a lesson. This is a perfect example of the collective power of community, and a perfect opportunity to learn more deeply.

Thank you